nn.CrossEntropyLoss


I am trying to compute the cross-entropy loss for a given output of my network. Can anyone help me? I am really confused and have tried almost everything I could imagine might help. This is the code that I use to get the output of the last timestep. I don't know if there is a simpler solution. If there is, I'd like to know it. This is my forward method.
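The code from the question is not shown here, so the following is only a minimal sketch of one common way to take the last timestep of a recurrent layer and pass it to nn.CrossEntropyLoss. The layer choice (nn.LSTM with batch_first=True), the linear head, and all sizes are assumptions made for illustration, not the asker's actual model.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes, chosen only for this illustration.
batch_size, seq_len, n_features, hidden_size, n_classes = 4, 7, 10, 16, 3

lstm = nn.LSTM(n_features, hidden_size, batch_first=True)
head = nn.Linear(hidden_size, n_classes)
criterion = nn.CrossEntropyLoss()

x = torch.randn(batch_size, seq_len, n_features)
target = torch.tensor([0, 2, 1, 2])  # class indices, dtype torch.int64

out, _ = lstm(x)            # (batch, seq_len, hidden_size)
last_step = out[:, -1, :]   # (batch, hidden_size): output at the last timestep
logits = head(last_step)    # (batch, n_classes): raw scores, no softmax applied
loss = criterion(logits, target)

print(logits.shape, loss.item())
```

Two details matter in this sketch: the logits go into the criterion without a softmax, and class-index targets are an integer (torch.long) tensor of shape (batch,).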


The nn.CrossEntropyLoss criterion is useful when training a classification problem with C classes. If provided, the optional argument weight should be a 1D Tensor assigning a weight to each of the classes; it has to be a Tensor of size C with a floating point dtype. This is particularly useful when you have an unbalanced training set.

The input is expected to contain the unnormalized logits for each class, which in general do not need to be positive or sum to 1. Besides the batch and class dimensions, the input may carry additional dimensions, which is useful for higher-dimensional inputs, such as computing the cross-entropy loss per pixel for 2D images.

The reduction argument controls how the individual losses are combined. With 'none', the unreduced loss is returned, one value per element. Otherwise the losses are reduced; by default ('mean'), they are averaged over each loss element in the batch.

The target can contain either class indices or probabilities for each class. Probabilities are useful when labels beyond a single class per minibatch item are required, such as for blended labels, label smoothing, etc. The performance of this criterion is generally better when the target contains class indices, as this allows for optimized computation. Consider providing the target as class probabilities only when a single class label per minibatch item is too restrictive.
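The arguments described above can be sketched as follows. The tensor sizes and the class weights are made-up values for illustration. The check on the weighted mean assumes that, with class-index targets, the 'mean' reduction divides by the sum of the weights of the target classes, which the comparison at the end verifies numerically.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

logits = torch.randn(5, 3)               # (minibatch, C) unnormalized scores
target = torch.tensor([0, 2, 1, 1, 2])   # class indices in [0, C)

# reduction='none' keeps one loss value per sample; the default averages them.
per_sample = nn.CrossEntropyLoss(reduction="none")(logits, target)
mean_loss = nn.CrossEntropyLoss()(logits, target)

# Hypothetical class weights for an unbalanced training set.
class_weight = torch.tensor([1.0, 2.0, 0.5])
weighted_none = nn.CrossEntropyLoss(weight=class_weight, reduction="none")(logits, target)
weighted_mean = nn.CrossEntropyLoss(weight=class_weight)(logits, target)
manual_weighted_mean = weighted_none.sum() / class_weight[target].sum()

# Higher-dimensional input: per-pixel loss for a batch of 2 images of 4x4 pixels.
pix_logits = torch.randn(2, 3, 4, 4)         # (minibatch, C, H, W)
pix_target = torch.randint(0, 3, (2, 4, 4))  # (minibatch, H, W)
pix_loss = nn.CrossEntropyLoss(reduction="none")(pix_logits, pix_target)

print(per_sample.shape, pix_loss.shape)
print(torch.allclose(weighted_mean, manual_weighted_mean))
```

Note that the class dimension comes second in the per-pixel case, and the target drops that dimension entirely.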


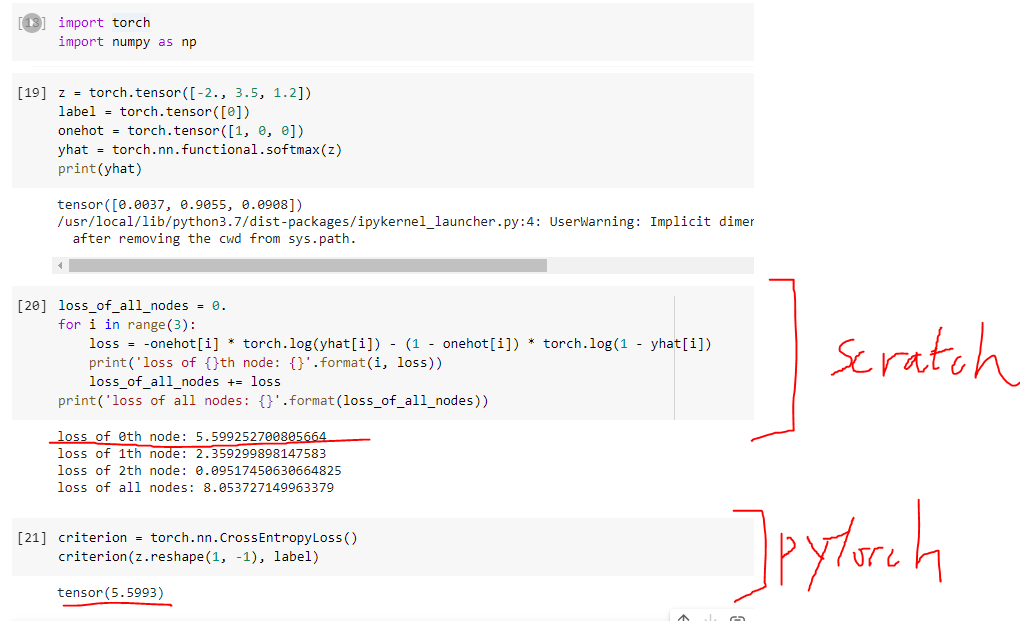

The cross-entropy loss function is an important criterion for evaluating multi-class classification models. This tutorial gives an overview of its significance and implementation in deep learning. Loss functions guide model training: the optimizer adjusts the model's parameters to reduce the loss, which in turn improves predictive accuracy. Cross-entropy is the standard choice for classification tasks, especially multi-class classification, because it quantifies the difference between the predicted probabilities and the actual class labels.
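To make "the difference between predicted probabilities and the actual class labels" concrete, here is a small sketch that computes the loss by hand as the average negative log of the softmax probability assigned to the true class, and compares it with PyTorch's built-in criterion. The logits and labels are arbitrary example values.

```python
import torch
import torch.nn as nn

# Arbitrary example values: 2 samples, 3 classes.
logits = torch.tensor([[2.0, 1.0, 0.1],
                       [0.5, 2.5, 0.3]])
target = torch.tensor([0, 1])

# Turn logits into probabilities, then pick the probability of the true class.
probs = torch.softmax(logits, dim=1)
picked = probs[torch.arange(len(target)), target]

# Cross-entropy: mean negative log-probability of the correct class.
manual_loss = -torch.log(picked).mean()
builtin_loss = nn.CrossEntropyLoss()(logits, target)

print(manual_loss.item(), builtin_loss.item())
```

The two printed values should agree up to floating point error. The closer the predicted probability of the true class is to 1, the closer the loss is to 0.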


If the target contains class probabilities, it must have the same shape as the input, and each value should be in the range [0, 1].
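A minimal sketch of a class-probability target, assuming a PyTorch version whose nn.CrossEntropyLoss accepts floating point targets (1.10 or later, to the best of my knowledge). The probability rows are made-up values; each row sums to 1.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

logits = torch.randn(3, 4)  # (minibatch, C)

# Same shape as the input, every value in [0, 1].
soft_target = torch.tensor([[0.70, 0.30, 0.00, 0.00],   # blended label
                            [0.00, 0.00, 1.00, 0.00],   # one-hot, same as class index 2
                            [0.25, 0.25, 0.25, 0.25]])  # uniform

loss = nn.CrossEntropyLoss()(logits, soft_target)

# Manual check: -sum_c p_c * log_softmax(x)_c, averaged over the batch.
manual = -(soft_target * F.log_softmax(logits, dim=1)).sum(dim=1).mean()

print(loss.item(), manual.item())
```

A one-hot row gives the same per-sample loss as the corresponding class index, so probability targets are only needed when a single label per item is too restrictive.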


In PyTorch, the cross-entropy loss is implemented as the nn.CrossEntropyLoss class. It is useful when training a classification problem with C classes. Internally it applies a log-softmax (nn.LogSoftmax) to the input logits, so the input should be a FloatTensor of raw scores and the target a LongTensor of class indices; for example, a LongTensor [2, 5, 1, 9] holds the target class indices for a batch of four samples. With label smoothing, the targets become a mixture of the original ground truth and a uniform distribution, as described in Rethinking the Inception Architecture for Computer Vision.
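The sketch below illustrates two points with the target class indices [2, 5, 1, 9]. First, it compares nn.CrossEntropyLoss with nn.LogSoftmax followed by nn.NLLLoss; the choice of nn.NLLLoss as the second stage is my assumption about the pairing the text alludes to. Second, it rebuilds the label-smoothed target as a mixture of the one-hot ground truth and a uniform distribution. The smoothing factor 0.1 and the logits are illustrative values, and the label_smoothing argument is assumed to be available (PyTorch 1.10 or later).

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

n_classes, eps = 10, 0.1                 # eps: hypothetical smoothing factor
logits = torch.randn(4, n_classes)       # FloatTensor of raw scores
target = torch.tensor([2, 5, 1, 9])      # LongTensor of class indices

# Cross-entropy versus log-softmax followed by negative log-likelihood.
ce = nn.CrossEntropyLoss()(logits, target)
nll = nn.NLLLoss()(nn.LogSoftmax(dim=1)(logits), target)

# Label smoothing: (1 - eps) * one-hot + eps * uniform over the classes.
smoothed = nn.CrossEntropyLoss(label_smoothing=eps)(logits, target)
one_hot = F.one_hot(target, n_classes).float()
mixed_target = (1 - eps) * one_hot + eps / n_classes
manual_smoothed = -(mixed_target * F.log_softmax(logits, dim=1)).sum(dim=1).mean()

print(torch.allclose(ce, nll), torch.allclose(smoothed, manual_smoothed))
```

Because the log-softmax is applied inside the criterion, adding a softmax layer before nn.CrossEntropyLoss would normalize twice and distort the loss.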
